Research

Our research develops and applies novel statistical and computational methodologies to tackle complex challenges in artificial intelligence, reinforcement learning, and dynamic real-world systems. We draw on large language models (LLMs), distributional reinforcement learning, spatio-temporal modeling, graph representation learning, and uncertainty quantification to advance automated statistical reasoning, optimize two-sided market equilibrium, ensure algorithmic robustness, improve off-policy evaluation, and build trustworthy AI.

AI for Statistics

We investigate how statistical principles can enhance the reliability and interpretability of large language models and, conversely, how LLMs can advance statistical methodology. Our work includes developing benchmark datasets to evaluate and improve the statistical reasoning capabilities of LLMs (StatEval) and designing agentic systems for automated statistical reasoning (StatProver).

Methodology and Theory of Reinforcement Learning

We develop rigorous methodological and theoretical foundations for reinforcement learning, focusing on distributional RL, off-policy evaluation, robustness under distribution shift, and sample-efficient learning. Our representative works include the design and analysis of quantile-based distributional reinforcement learning (NeurIPS 2020; IJCAI 2021; IEEE TNNLS 2024; ICML 2023), theoretical studies of reinforcement learning in both online and offline settings (JASA 2026; JASA 2024; JMLR 2023), and off-policy evaluation in confounded environments (NeurIPS 2024; NeurIPS 2025).

Two-Sided Markets and Spatio-Temporal Systems

We apply advanced machine learning methods to optimize complex, dynamic two-sided platforms in the real world. By integrating statistics, optimization, and reinforcement learning, we address challenges in ride-sharing equilibrium, recommendation systems, and spatio-temporal forecasting. Our representative work includes quantifying supply–demand equilibrium (JASA 2021), spatio-temporal prediction and policy optimization (JCGS 2026; ICDM 2021), and constrained policy optimization for recommendation systems (KDD 2022).

Uncertainty Quantification

Reliable AI requires principled uncertainty assessment. We develop statistically grounded methods for understanding uncertainty, reliability, and distributional behavior in modern learning systems. Our work includes robust statistical prediction and influence-based model assessment (ICLR 2025), trustworthy large language models (EACL 2026), and adversarial learning in reinforcement learning systems (ICML 2023).

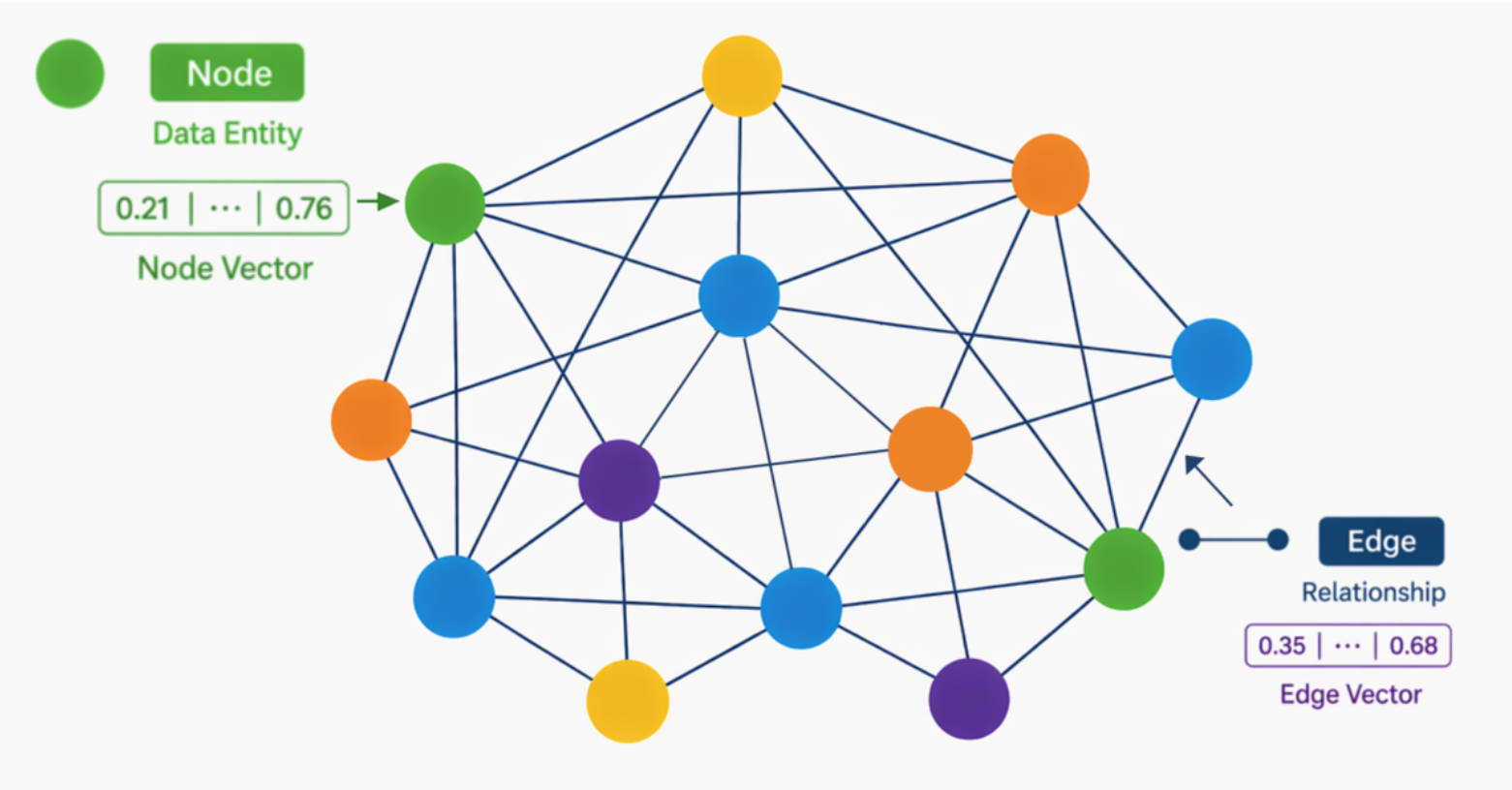

Graph Representation Learning

Graph-structured data is ubiquitous in modern machine learning, motivating the development of specialized statistical methods. We take a first step toward diffusion models for graph-structured data by proposing directional diffusion models with anisotropic, data-dependent noise, which preserve semantically meaningful representations (NeurIPS 2023). In graph-based semi-supervised settings, where node labels are often missing not at random, we further propose GNM (Graph-based joint model with Nonignorable Missingness), which jointly models the graph structure and corrects for sampling bias (NeurIPS 2019).